Machine Learning Archive

Understanding The Exploding and Vanishing Gradients Problem

On October 31, 2021 In Deep Learning, Machine Learning

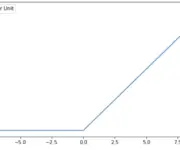

In this post, we develop an understanding of why gradients can vanish or explode when training deep neural networks. Furthermore, we look at some strategies for avoiding exploding and vanishing gradients. The vanishing gradient problem describes a situation encountered in the training of neural networks where the gradients used to update the weights shrink

Dropout Regularization in Neural Networks: How it Works and When to Use It

On October 27, 2021 In Deep Learning, Machine Learning

In this post, we will introduce dropout regularization for neural networks. We first look at the background and motivation for introducing dropout, followed by an explanation of how dropout works conceptually and how to implement it in TensorFlow. Lastly, we briefly discuss when dropout is appropriate. Dropout regularization is a technique to prevent neural

Weight Decay in Neural Networks

On October 16, 2021 In Deep Learning, Machine Learning

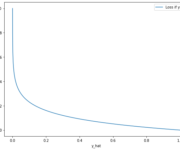

What is Weight Decay? Weight decay is a regularization technique in deep learning. It works by adding a penalty term to the cost function of a neural network, which has the effect of shrinking the weights during backpropagation. This helps prevent the network from overfitting the training data as well as the exploding

Feature Scaling and Data Normalization for Deep Learning

On October 5, 2021 In Deep Learning, Machine Learning

Before training a neural network, there are several things we should do to prepare our data for learning. Normalizing the data by performing some kind of feature scaling is a step that can dramatically boost the performance of your neural network. In this post, we look at the most common methods for normalizing data

An Introduction to Neural Network Loss Functions

On September 28, 2021 In Deep Learning, Machine Learning

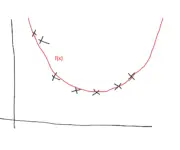

This post introduces the most common loss functions used in deep learning. The loss function in a neural network quantifies the difference between the expected outcome and the outcome produced by the machine learning model. From the loss function, we can derive the gradients, which are used to update the weights. The average over

Understanding Basic Neural Network Layers and Architecture

On September 21, 2021 In Deep Learning, Machine Learning

This post will introduce the basic architecture of a neural network and explain how input layers, hidden layers, and output layers work. We will discuss common considerations when architecting deep neural networks, such as the number of hidden layers, the number of units in a layer, and which activation functions to use. In our

Understanding Backpropagation With Gradient Descent

On September 13, 2021 In Deep Learning, Machine Learning

In this post, we develop a thorough understanding of the backpropagation algorithm and how it helps a neural network learn new information. After a conceptual overview of what backpropagation aims to achieve, we go through a brief recap of the relevant concepts from calculus. Next, we perform a step-by-step walkthrough of backpropagation using an

How do Neural Networks Learn

On September 3, 2021 In Deep Learning, Machine Learning

In this post, we develop an understanding of how neural networks learn new information. Neural networks learn by propagating information through one or more layers of neurons. Each neuron processes information using a non-linear activation function. Outputs are gradually nudged towards the expected outcome by combining input information with a set of weights that

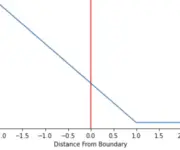

Understanding Hinge Loss and the SVM Cost Function

On August 22, 2021 In Classical Machine Learning, Machine Learning

In this post, we develop an understanding of the hinge loss and how it is used in the cost function of support vector machines. Hinge Loss: The hinge loss is a specific type of cost function that incorporates a margin or distance from the classification boundary into the cost calculation. Even if new observations

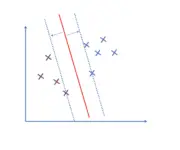

What is a Support Vector?

On August 17, 2021 In Classical Machine Learning, Machine Learning

In this post, we will develop an understanding of support vectors, discuss why we need them, how to construct them, and how they fit into the optimization objective of support vector machines. A support vector machine classifies observations by constructing a hyperplane that separates these observations. Support vectors are observations that lie on the